They were up late counting the votes in Brandon-Souris Monday night. That, despite the fact that the final poll of the campaign showed the Liberals with a commanding 29-point lead. In the end, the Liberal vote was 16 points lower and the Tory vote was 14 points higher. It would have been a shocking result, if Forum’s reputation wasn’t such that pundits were already chuckling about that poll long before the results rolled in.

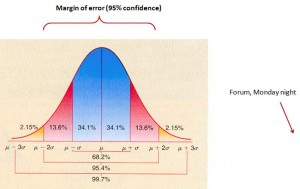

Before continuing on, I think it’s important to recognize just how far these numbers missed the mark. Some have talked about the “19 times in 20” disclaimer at the end of every margin of error, writing this off as (yet another) 1 in 20 rogue poll. I won’t turn this into a statistics seminar, but bell curves are such that most misses should be close misses. This weather website predicts that the average high in Brandon ranges from -15 to +13 ºC nine days out of ten in November – but that doesn’t mean there was a 1 in 10 chance scrutineers would be pulling out the Bermuda shorts on election day. That tenth day is usually going to be fairly close to the range.

[MATH WARNING – READ ON AT YOUR OWN PERIL]

The reality is, based on Forum’s quoted margin of error and sample size, they were off by around 6 standard deviations. Based on sampling theory, the odds of that happening in a poll from a truly random sample are effectively nil: somewhere in the neighbourhood of 150 million to 1. (So more likely than the Liberals winning Macleod, but still very unlikely.)
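For anyone who wants to check this kind of arithmetic themselves, here is a rough Python sketch. It converts a "19 times in 20" margin of error into a standard error, expresses a miss in standard errors, and turns that into two-sided odds under a normal curve. The function names and the margin of error plugged in at the bottom are my own placeholders, not Forum's published figures, so the printed odds will not necessarily match the number quoted above.

```python
import math

def sigma_miss(miss_points, moe_points, z_conf=1.96):
    """Express a polling miss in standard errors.

    moe_points is the quoted "19 times in 20" margin of error, which
    corresponds to roughly 1.96 standard errors on a normal curve.
    """
    standard_error = moe_points / z_conf
    return miss_points / standard_error

def two_sided_odds(z):
    """Return N such that a miss at least this large (in either
    direction) happens about 1 time in N for a truly random sample."""
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return 1 / p

if __name__ == "__main__":
    ASSUMED_MOE = 5.0    # hypothetical margin of error, in points
    LIBERAL_MISS = 16.0  # the Liberal miss described in the post, in points
    z = sigma_miss(LIBERAL_MISS, ASSUMED_MOE)
    print(f"{z:.1f} standard errors, roughly 1 in {two_sided_odds(z):,.0f}")
```

The sanity check is that a miss exactly equal to the margin of error comes out at about 1 in 20, which is what the disclaimer promises. The odds fall off very quickly after that, which is the point of the Bermuda shorts example.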

OK, no more math. But I hope I’ve made the point that this isn’t the sort of thing that can happen by chance.

Forum President Lorne Bozinoff has his own theories:

He speculated that the difference between the final Brandon poll and the actual by-election outcome may have been that the Conservatives had a better “get out the vote” ground game than the Liberals. As well, he said some constituents who were angry about the perception of a fixed Tory nomination may have found they just couldn’t bring themselves to vote Liberal once they got into the ballot box.

I absolutely agree the ground game matters. However, you won’t find anyone in politics who believes the difference between the best ground game and no ground game at all is more than 5 or 6 points. Both parties were pumping resources and volunteers into Brandon, so every door got knocked – GotV may very well have been the reason the Tories won, but it doesn’t explain a 15-point swing. If it made that kind of difference, there’s no way the NDP would have swept Quebec last election.

I can somewhat buy last-second switches playing a big role in the recent Alberta and BC elections, but it shouldn’t have been an issue in Brandon – especially when Forum showed Liberal support trending up. If that was what happened, then Bozinoff is basically saying that opinion polling is worthless. Because if the electorate is actually going to swing 15 points in under 24 hours, then a poll showing the Tories at 30% on election day means they’ll finish anywhere from Kim Campbell territory (15%) right up to a majority government of historic proportions (45%).

Instead, what we’re dealing with appears to be flawed methodology. Bozinoff has admitted that some respondents may have been called for 3 consecutive polls, and that likely wouldn’t have happened unless the response rate was in the neighbourhood of 1% (typical for robo-polls, when you don’t do callbacks). Heck, Sunday being the Grey Cup, it may have been even lower. Sampling methodology only works if you assume survey respondents are similar to the public at large – otherwise, these polls are no more accurate than the “self selecting” click polls you see on websites, asking what you think of Miley Cyrus’ antics.

The obvious solution is more regulation on the polling industry, in terms of standards and disclosure. In the absence of that, it’s up to the media to show restraint when reporting what are clearly flawed robo-polls. Yes, they’re free. Yes, they make for an interesting “news” story – and bad polls make for an especially interesting “news” story because they run counter to the common wisdom.

Polls provide information, and information is a valuable tool. However, passing off faulty information as accurate, and giving it what is clearly not a “real” margin of error is dangerous. These freebie polls have shown themselves to be no more useful than “word on the street” anecdotes, and they should not be given any more credibility than that.

Please note – part of this issue, as noted by John Wright, is a lack of disclosure. So, in the interests of full disclosure, I should remind readers that I work at a polling company, albeit one where “robocalls” are considered a four-letter word.

2 responses to “Margin of Error”

A couple thoughts: first, while we like to think of it as a balanced bell curve, it will actually likely skew to one side or the other. (E.g. if we say the Rhinos will get 2%, +/- 5%, we don’t mean they can get -3% and still be within the “margin of error” (e.g. one S.D.).) But that’s probably not important; if it were, you would have included it.

More important is the underlying question: do those responding to the poll actually intend to vote? What if they intended to vote, but simply forgot to do so, with no national media saturation for a by-election? There are problems with polling today, and unless we dig deep we won’t find the answers.

That’s a good point – in reality, the margin of error is going to be lower when you get to smaller or larger percentages (the figures quoted always assume a “real” probability of 50%). That makes a certain amount of intuitive sense since, after all, you can’t really have a +/- 5% on a 2% number.

Since we’re dealing with percentages around 50% here, it’s not a huge issue.
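To put rough numbers on that, here is a small sketch using the textbook margin of error formula for a simple random sample. The sample size of 400 is a placeholder I picked for illustration, not the size of any actual poll, and the normal approximation behind the formula gets shaky for very small shares like 2%.

```python
import math

def margin_of_error(p, n, z=1.96):
    """19-times-in-20 margin of error for a share p from a simple
    random sample of n respondents (normal approximation)."""
    return z * math.sqrt(p * (1 - p) / n)

if __name__ == "__main__":
    ASSUMED_N = 400  # hypothetical sample size, for illustration only
    for share in (0.02, 0.10, 0.30, 0.50):
        moe = margin_of_error(share, ASSUMED_N)
        print(f"share {share:.0%}: +/- {moe * 100:.1f} points")
```

The margin is widest at 50% and shrinks toward either end, which is why the single figure quoted with a poll is the worst case.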

Your second point hits on the heart of the issue. The holy grail of political polling is a “turnout model” that can predict if people will actually vote.

It’s something parties are experimenting with. Even though I don’t live in Toronto Centre, I somehow found myself on a call list for Toronto Centre, so I got a robopoll before the vote asking me in 3 different ways if I intended to vote, and then a follow-up call asking me if I did in fact vote, what I based my decision on, etc. Obviously someone is experimenting with turnout models, to try to figure out what makes voters click. That’s smart.